
Integrating biology and robotics would allow robots to feel, smell, or hear in order to react to changing environments, opening a wide range of applications.

Our senses allow us to interact with our surroundings. They are also the basis for learning. In the quest to make machines more efficient, researchers are looking at one of the best qualities of living things: adaptability. The goal is to make robots able to face unexpected situations in the real world.

Our technologies can already sense the environment far beyond human capabilities, from atoms to the most distant stars. However, that kind of equipment often requires large installations and complex data interpretation. “Robot senses should be functional in human-scale mobile lightweight artifacts, operating in variable scenarios with affordable requirements in terms of computational power and energy supply,” said Calogero Maria Oddo, Head of the Neuro-Robotic Touch Laboratory at The BioRobotics Institute in Pisa, Italy.

To achieve this goal, scientists are drawing inspiration from nature. In turn, new robotic components and systems may offer deeper insight into how nature operates. “In traditional terms, the engineer invents whereas the scientist discovers, but biorobotics allows establishing fertile loops between discovery engines and technological developments,” Oddo noted.

Sensing touch and smell

Touch has been essential to human survival. It allows us to understand and interact with the world and to sense danger in the form of pain. In 2021, the Nobel Prize in Physiology or Medicine went to research into the molecular receptors that allow us to detect temperature and touch.

Oddo’s research group studies how our nervous system processes touch in order to replicate it in biorobotics systems. This could have applications such as restoring a certain degree of touch in amputees wearing prostheses or developing sensing robots that can be operated remotely.

A challenge to replicating the sense of touch is that it requires a large number of individual sensors. Researchers at Technische Universität München in Germany have developed an electronic skin composed of hexagonal sensing modules, which can be attached to a variety of surfaces. The system works with low power consumption, replicating a natural mechanism: instead of continuously sending information to the brain, human skin receptors remain inactive until they detect a change.
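The change-triggered principle described above can be illustrated with a minimal sketch. It is not the Munich team's implementation: the function name `event_driven_readings`, the threshold value, and the sample pressure readings are all assumptions made for illustration. The idea is simply that a sensor stays silent unless its reading moves noticeably away from the last value it reported.

```python
from typing import Iterable, List, Tuple


def event_driven_readings(
    samples: Iterable[float], threshold: float = 0.1
) -> List[Tuple[int, float]]:
    """Return (index, value) events only when a reading changes noticeably.

    Hypothetical helper: a reading is reported if it differs from the last
    reported value by more than `threshold`; otherwise the sensor stays silent.
    """
    events: List[Tuple[int, float]] = []
    last_reported = None
    for index, value in enumerate(samples):
        if last_reported is None or abs(value - last_reported) > threshold:
            events.append((index, value))
            last_reported = value
    return events


# Made-up pressure readings: rest, a press, then release.
pressure = [0.0, 0.0, 0.02, 0.5, 0.52, 0.5, 0.0, 0.0]
events = event_driven_readings(pressure, threshold=0.1)
print(events)  # only 3 of the 8 samples are transmitted
```

In this toy run, only the first reading, the press, and the release are sent on, which is where the savings in data traffic and power would come from.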

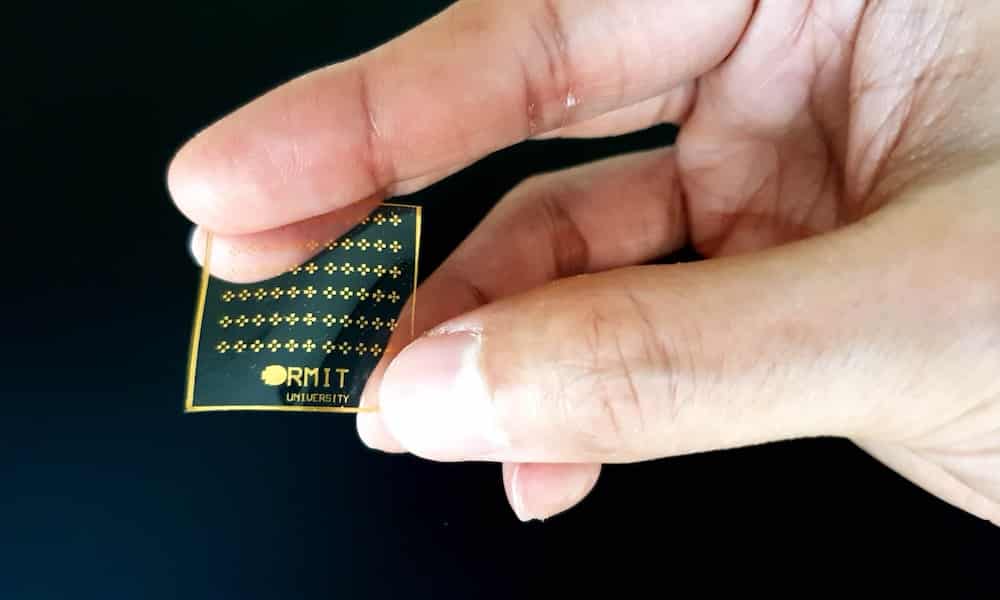

It might also be useful for robots to feel pain, since undetected injuries could lead to serious accidents. To achieve this, a team at RMIT University in Melbourne, Australia, has developed an artificial skin that reacts to pain, mimicking the body’s ability to provide immediate feedback when pressure, heat, or cold reaches a certain threshold.

In a similar effort to endow robots with warning signals, another group of researchers at the Chinese University of Hong Kong has created an artificial skin that can change color to simulate bruising. They achieved this using a molecule called spiropyran, which changes color from pale yellow to bluish-purple under mechanical stress.

Scientists still need to better understand our sensory systems to reproduce them with biorobotics. Smell is probably the least understood. This system is so complex that scientists are still unable to predict how a specific odor will be interpreted by our brain. Still, researchers are trying to recreate it in robots.

Scientists at the CEA Tech institute in France have developed an artificial nose and integrated it into a robot that can detect survivors in rescue operations. The nose was made using biosensors with odor-binding proteins. It can smell a human victim beneath rubble and indicate whether the person is still alive.

Artificial olfactory systems could be very useful for smell evaluation in the food and perfume industries, since they can be more precise than humans. Animals such as dogs can also perform this task, but require extensive and expensive training.

A research team at the Hebrew University of Jerusalem has created an optical nose that can sense smells using carbon nanotubes. The device uses machine learning to detect unique smell patterns and distinguish the aromas of red wine, beer, and vodka, among others.

Robotic super senses

In recent years, scientists have been using biological components to enhance robots’ abilities to interact with humans. According to Oddo, bio-hybrid components can replicate useful traits from biology, such as self-healing, replicating the structure of neuronal connections, or finding their way through our body.

For example, a research team at Duke University, US, has designed a dragonfly-like robot with wings made of a self-healing hydrogel. Changes in the surrounding pH make the hydrogel break or heal, making it move away from acidic environments. This lets the robot find oil spills and clean the contaminant by soaking it up with sponges the robot carries under its wings.

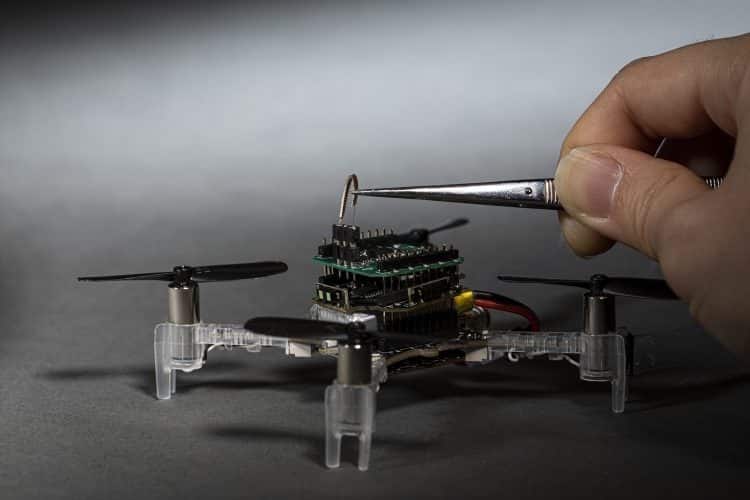

Another curious example of biorobotics is the smellicopter, an autonomous drone that can avoid obstacles using a live antenna from a moth to navigate toward smells. Developed by researchers at the University of Washington, this robot could be used to access dangerous places to sniff out gas leaks, explosives, or survivors.

“Cells in a moth antenna amplify chemical signals,” stated Professor Thomas Daniel. “The moths do it really efficiently — one scent molecule can trigger lots of cellular responses, and that’s the trick. This process is super efficient, specific, and fast.”

This approach could also be used to replicate the sense of hearing. Scientists from Tel Aviv University have connected a locust’s ear to a robot, taking advantage of this insect’s ability to detect sound. When the researchers clap once, the locust’s ear reacts to the sound and the robot moves forward; when the researchers clap twice, the robot moves backwards.
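The clap-to-movement behavior can be pictured as a small piece of control logic. The sketch below is purely illustrative and is not the Tel Aviv team's software: the function names, the one-second grouping window, and the "ignore" fallback are assumptions, and the timestamps stand in for moments when the ear's electrical signal indicates a clap.

```python
from typing import List


def count_clap_groups(timestamps: List[float], window: float = 1.0) -> List[int]:
    """Group clap times (in seconds) that fall within `window` of a group's first clap.

    Hypothetical helper: returns the number of claps in each group.
    """
    groups: List[List[float]] = []
    for t in sorted(timestamps):
        if groups and t - groups[-1][0] <= window:
            groups[-1].append(t)
        else:
            groups.append([t])
    return [len(group) for group in groups]


def command_for_claps(clap_count: int) -> str:
    """Map a clap count to a movement, as described in the article."""
    if clap_count == 1:
        return "forward"
    if clap_count == 2:
        return "backward"
    return "ignore"  # assumption: other counts trigger no movement


# Made-up detection times: one clap, then two quick claps, then one clap.
detected = [0.0, 3.0, 3.4, 7.0]
commands = [command_for_claps(n) for n in count_clap_groups(detected)]
print(commands)
```

The interesting part of the real system is the biological ear doing the detection; once a clap is detected, the decision step can be as simple as this.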

Oddo’s research group is investigating the use of human skin cells such as fibroblasts and ciliate cells to develop skin devices sensitive to touch. However, further research is needed. “Engineering artifacts with cultured cells has technical complexities, for example related to viability and biosafety, that should be solved before such technologies have application in real operational scenarios,” added Oddo.

Nature is crowded with information. However, our senses can grasp just a tiny part of it. “There is a lot going on in our world that our own human sensors don’t sense, such as magnetic fields, electromagnetic signals beyond the visual spectrum, very low and very high acoustic waves beyond our auditory capacity, and more. There are countless examples of animals sensing things we don’t,” said Bradley Nelson, Professor of Robotics and Intelligent Systems at ETH Zürich in Switzerland.

For instance, some animals have amazing abilities to detect explosives, drugs, or diseases. Others can sense earthquakes or sonar signals. Researchers from MIT have already created a robot that uses radio waves to locate and grasp objects hidden from view.

“All areas of sensing need to be improved,” said Nelson, who believes that artificial intelligence will be essential to reach superior perception in biorobotics in order to make decisions on how to respond to the environment.

“Force sensing is important for physical interaction in the world, but smart skin still needs a lot of work. Computer vision has made recent progress with improvements in object recognition due to deep learning, but actually understanding an image’s ‘story’ is still rudimentary. Speech recognition has progressed, but we all know how challenging it is in noisy environments.”

The boundaries between machines and living creatures are breaking down. “In my opinion, future biorobotics systems will further advance the current idea of artificial intelligence. Computational functions will no more be the result of a series of operations, but of an architectural design and connectivity among all involved subsystems, including sensors and effectors,” said Oddo.

Cover illustration by Anastasiia Slynko. Images provided by RMIT University and University of Washington.